
Thursday, November 19th, 2015

| Time | Event |

| 1:15a | AMD Launches Star Wars Battlefront Game Bundle For Radeon R9 Fury

Buying new graphics cards is always fun. Finding game deals while shopping for new graphics cards is even better. This time around AMD is bundling the newly released Star Wars Battlefront with the Radeon R9 Fury, which we saw released last July. Those who have been looking for a new R9 Fury, perhaps to enjoy some new games this season, will want to check the terms carefully so that they can take advantage of this promotion and add Star Wars Battlefront to their to-do list.

This promotion runs from November 17, 2015 to January 31, 2016. Star Wars Battlefront game keys will be provided while supplies last, and any game codes received must be redeemed before February 29, 2016. Note that only the AMD Radeon R9 Fury is eligible for the Star Wars Battlefront bundle, meaning that the game will not be included with either the R9 Fury X or the R9 Nano. It’s unusual to see a bundle apply to only one card in this fashion, but looking at current GPU prices it would appear that this is specifically meant to be AMD’s counter to the GeForce GTX 980 for the holiday season, using the bundle to justify the R9 Fury’s higher average price and to offset NVIDIA’s own game bundle.

Meanwhile, though this isn’t specifically an AMD Gaming Evolved game, in their announcement AMD notes that they have been working with both EA and DICE to ensure that Star Wars Battlefront performs well on Radeon GPUs. Though some of these technologies have appeared in past Frostbite games, Battlefront includes the latest iterations of DICE’s physically based rendering, displacement mapped terrain tessellation, and compute shader ambient occlusion routines. Those and other features have led to what I think is a great showcase of modern graphics technology, though a verdict on performance relative to the competition will have to wait until the public has had more time with the game. For more information and participating vendors please visit the promotions website. |

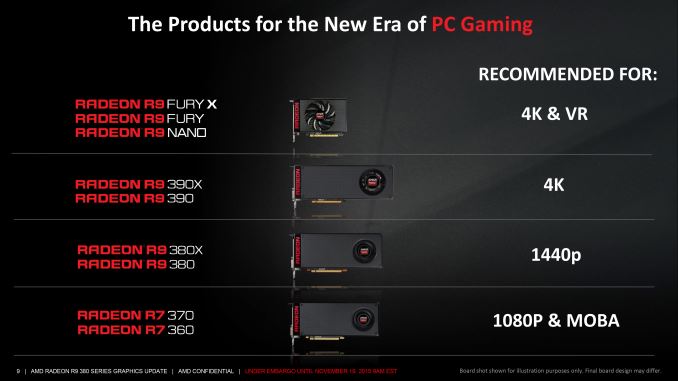

| 9:01a | AMD Launches Radeon R9 380X: Full-Featured Tonga at $229 for the Holidays

Back in September of 2014 AMD released their first Graphics Core Next 1.2 GPU, Tonga, which was the GPU at the heart of the Radeon R9 285. For all intents and purposes Tonga was the modern successor to AMD’s original GCN GPU, Tahiti, packing in the same 32 CUs and 32 ROPs, while other features such as color compression allowed AMD to trim the memory bus to 256 bits wide without a performance hit. With Tahiti slowly going out of date from a feature perspective, Tonga was an interesting and unprecedented mid-cycle refresh of a GPU.

However, in the 14 months since the launch of the first Tonga product AMD has never released a fully enabled desktop SKU, until now. The Radeon R9 285 utilized a partially disabled Tonga – only 28 of 32 CUs were enabled – and while it was refreshed as the Radeon R9 380 as part of the Radeon 300 series launch, a fully enabled version of Tonga only showed up in mobile, where in the form of the R9 M295X it was used in the 27” iMac. In its place AMD continued selling the Tahiti based Radeon R9 280 series for much longer than we would have expected, leading to an atypical situation for AMD where a card using the fully enabled GPU is only now showing up, over a year later. In some ways the Radeon R9 380X is a card we were starting to think we’d never see.

But at last a full-featured Tonga is here as the heart of AMD’s latest video card, the Radeon R9 380X. AMD is launching the 380X at this time to set up their product stack for the holidays, looking to shake up the market shortly before Black Friday and carve out a spot in the gap between NVIDIA’s GeForce GTX 970 and GTX 960 cards. By hitting NVIDIA a bit above the ever-popular $200 spot, AMD is aiming to edge out NVIDIA on price/performance while also snagging gamers looking to upgrade from circa 2012 video cards.

Starting as always from a specification comparison, the R9 380X is going to be a very straightforward card. Rather than the R9 380’s 28 CUs, all 32 CUs are enabled for the R9 380X. As this was the only thing disabled on the R9 380, the additional stream processors and texture resources are the only material GPU change relative to the R9 380. Otherwise we’re still looking at the same 32 ROPs backed by a 256-bit memory bus, all clocked at 970MHz.

Meanwhile as far as memory goes, the R9 380X sees AMD raise the default memory configuration from 2GB for the R9 380 to 4GB for this card. We’ve reached the point where 2GB cards are struggling even at 1080p – thanks in large part to the consoles and their 8GB of shared memory – so to see 4GB as the base configuration is a welcome change. The R9 380 did offer both 2GB and 4GB, but as one might expect, 2GB was (and still is) the more common SKU, which for better or worse makes the R9 380X stand apart from its older sibling even more. Otherwise the 5.7Gbps memory clockspeed of the R9 380X is a slight bump from the 5.5Gbps of the 2GB R9 380, though it should be noted that 5.7Gbps was also the minimum for the 4GB R9 380 SKUs. So in practice, just as there’s no increase in the GPU clockspeed, there’s no increase in the memory clockspeed (or bandwidth) relative to the 4GB R9 380 cards.

Similarly, from a power perspective the R9 380X’s typical board power remains unchanged at 190W. In practice it will be slightly higher thanks to the enabled CUs, but otherwise AMD hasn’t made any significant changes to shift it one way or another.

From a performance perspective then, the R9 380X is not going to be a very exciting card. After three releases of the fully enabled Tahiti GPU – Radeon 7970, 7970 GHz Edition, and R9 280X – the architectural and clockspeed similarities of the R9 380X mean that it’s essentially a fourth revision of this product. Which is to say that you’re looking at performance a percent or two better than the 7970, well-trodden territory at this point.

The R9 380X’s principal reason to exist at this point is to allow AMD to refresh their lineup by tapping the rest of Tonga’s GPU performance, both to have something new to close out the rest of the year and to give them a card that can sit solidly between NVIDIA’s GeForce GTX 970 and GTX 960. That AMD is launching it now is somewhat arbitrary – we haven’t seen anything new in the $200 to $500 range since the GTX 960 launched in January and AMD could have launched it at any time since – and along those lines AMD tells us that they haven’t seen a need to launch this part until now. With the R9 380 otherwise shoring up the $199 price point until more recently, there’s always a trade-off to be had between having better positioning than the competition and having too many products in your line (with the 300 + Fury series the tally is now 9 cards).

In AMD’s new lineup the R9 380X will slot in between AMD’s more expensive R9 390 and the cheaper R9 380. AMD is promoting this card as an entry-level card for 2560x1440 gaming, though with the more strenuous games released in the last 6 months that is going to require some quality compromises to achieve. As it stands I’d consider the 390 more of a 1440p card, while the R9 380X is better positioned as AMD’s strongest 1080p card; only in the most demanding games should the R9 380X face any real challenge.
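The specification differences described above can be checked with a little arithmetic. The following is a minimal sketch rather than anything from AMD: it assumes 64 stream processors per GCN compute unit (a figure the article does not state), and the function names are purely illustrative. Peak memory bandwidth is taken as the bus width multiplied by the per-pin data rate, divided by 8 bits per byte.

```python
# Assumption (not stated in the article): each GCN compute unit (CU)
# contains 64 stream processors.
STREAM_PROCESSORS_PER_CU = 64


def stream_processors(compute_units: int) -> int:
    """Total stream processors for a given number of enabled CUs."""
    return compute_units * STREAM_PROCESSORS_PER_CU


def memory_bandwidth_gb_s(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Peak memory bandwidth in GB/s: bus width (bits) x per-pin rate (Gbps) / 8."""
    return bus_width_bits * data_rate_gbps / 8


# (enabled CUs, memory bus width in bits, memory data rate in Gbps),
# using the figures quoted in the text above.
CARDS = {
    "R9 380 (2GB)": (28, 256, 5.5),
    "R9 380X": (32, 256, 5.7),
}

for name, (cus, bus_bits, rate) in CARDS.items():
    print(
        f"{name}: {stream_processors(cus)} stream processors, "
        f"{memory_bandwidth_gb_s(bus_bits, rate):.1f} GB/s peak bandwidth"
    )
```

Under those assumptions the four extra CUs add 256 stream processors, while the memory clock bump over the 2GB R9 380 is worth only a few GB/s of peak bandwidth.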

As far as performance goes then, the R9 380X is about 10% faster than the 2GB R9 380 at 1080p, with the card taking a much more significant advantage in games where 2GB cards are memory bottlenecked. Otherwise the performance is almost exactly on par with the 7970 and its variants, while the more powerful R9 390 has a sizable 43% performance advantage thanks to its greater CU count, memory bandwidth, and ROPs. This makes the R9 390 a bit of a spoiler on value, though its $290+ price tag ultimately puts it in its own class. Or to throw in a quick generational comparison to AMD's original $250 GCN card, the Radeon HD 7850, you're looking at a 75% increase in performance at this price bracket over 3 years.

Today’s launch of the R9 380X is a hard launch, with multiple board partners launching cards today. Most of the partners will be reusing their R9 380 designs, which is fitting given the similarities between the two cards. Expect to see a significant number of factory overclocked cards, as Tonga has some headroom for the partners to play with. OC cards will start at $239 – a $10 premium – while the card we’ve been sampled from AMD, ASUS’s STRIX R9 380X OC, will retail for $259.

As for the competition, as I previously mentioned AMD will be slotting in between the GeForce GTX 970 and GTX 960. The former is going to be quite a bit faster but also quite a bit more expensive, while the R9 380X will handily best the 2GB GTX 960, albeit with a price premium of its own. At this point it’s safe to say that AMD holds a distinct edge on performance for the price, as they often do, though as has been the case all this generation they aren’t going to match NVIDIA’s power efficiency.

Finally, on a housekeeping note we’ll be back on Monday with a review of the ASUS STRIX R9 380X alongside a look at performance at reference clocks. We’ve only had the card and AMD’s launch drivers since the beginning of this week and there is still some work to be done before we can publish our review, so stay tuned. |
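The remark in the R9 380X entry that the R9 390 is "a bit of a spoiler on value" can be made concrete with a rough performance-per-dollar comparison. This is only a sketch built from the figures quoted in that entry: it treats the R9 390's "$290+" price as exactly $290 (an assumption, so the result is a best case for the R9 390), and the helper name is illustrative.

```python
def relative_value(perf_ratio: float, price: float, baseline_price: float) -> float:
    """Performance per dollar relative to a baseline card (baseline = 1.0).

    perf_ratio is the card's performance divided by the baseline's performance.
    """
    return perf_ratio / (price / baseline_price)


R9_380X_PRICE = 229.0  # launch price from the article
R9_390_PRICE = 290.0   # assumption: the low end of the quoted "$290+"
R9_390_PERF = 1.43     # the quoted 43% advantage over the R9 380X

r9_390_value = relative_value(R9_390_PERF, R9_390_PRICE, R9_380X_PRICE)
print(f"R9 390 performance per dollar vs. R9 380X: {r9_390_value:.2f}x")
```

With these inputs the R9 390 delivers roughly 13% more performance per dollar than the R9 380X, and that margin shrinks as the actual street price of the R9 390 rises above $290.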

| 2:45p | SuperComputing 15: Intel’s Knights Landing / Xeon Phi Silicon on Display

There are lots of stories to tell from the SuperComputing 15 conference here in Austin, but there is a clear overriding theme: in order to reach ‘Exascale’ (the fancy name given to the point where a supercomputer hits one ExaFLOP, 10¹⁸ FLOPS, in LINPACK), PCIe co-processors and accelerators are going to be vital. Looking at the Top500 supercomputers list that measures supercomputers in pure FLOPS, or the Green500 and Graph 500 lists that focus on FLOPS/watt and graph compute performance respectively (graph compute covering workloads such as social networks, with users as nodes and relationships as edges), it is clear that focused silicon is key to getting peak performance and performance per watt. That focused silicon is often built around NVIDIA’s Tesla high performance computing cards, FPGAs (field programmable gate arrays) from Xilinx or Altera, focused architecture designs such as PEZY, or Intel’s Xeon Phi co-processing cards.

We’ve reported on Xeon Phi before, regarding the initial launch of the first generation Knights Corner (KNC) with 6GB, 8GB or 16GB of onboard memory. These parts are listed on Intel's ARK at $1700 to $4150 (most likely less for bulk orders), but KNC forms the backbone of the compute behind the world’s number 1 supercomputer, the Tianhe-2 in China. Over the course of SC15, more details have emerged about the 2nd generation, Knights Landing (KNL), regarding availability and memory configuration: it uses up to 16GB of onboard high-bandwidth memory, with a custom protocol over Micron’s HMC technology and eight onboard HMC memory controllers.

As part of a briefing at SC15, Intel had a KNL wafer on hand to show off. From this image, we can see about 9.4 dies horizontally and 14 dies vertically, suggesting a die size (give or take) of 31.9 mm x 21.4 mm, or ~683 mm². This die size comes in over what we were expecting, but at least falls in line with other predictions based on the route taken by the first generation, Knights Corner. Relating this to transistor counts, we have differing stories: Charlie Wuischpard (VP of Intel’s Data Center Group) stated 8 billion transistors to us at the briefing, while Diane Bryant (SVP / GM, Data Center Group) reportedly stated 7.1 billion at an Intel investor briefing in November 2014, though we can only find one report of the latter. This would come down to the wobbly metric of 10.4-11.7 million transistors per square millimeter.

The interesting element about KNL is that where the 1st generation KNC was only available as a PCIe add-in coprocessor card, KNL can be either the main processor on a compute node or a co-processor in a PCIe slot. Typically Xeon Phi has an internal OS to access the hardware, but this new model eliminates the need for a host node: placing a KNL in a socket will give it access to both 16GB of high speed memory (the MCDRAM) and six memory channels for up to 384GB of DDR4, at the expense of the Intel Omni-Path controller. The KNL will also have 36 PCIe lanes, which can host two more KNC co-processor cards with another four lanes left for other purposes.

As you might expect, due to the differences we end up with the same die on different packages – one as a processor (top) and one as a co-processor which uses an internal connector for both data and Omni-Path. Given the size of the die and the orientation of the cores in the slide above (we can confirm based on the die that it’s a 7x6 arrangement taking into account memory controllers and other IO), the non-rectangular shape of the heatspreader over the package is due to the MCDRAM / custom-HMC high speed memory. Looking from the side, the heatspreader clearly does not go down onto the package all the way around, in order to deal with the on-package memory. The connector end of the co-processor has an additional chip under the heatspreader, which is most likely an Intel Omni-Path connector or a fabric adaptor for adjustable designs. This will go into a PCIe card, have a cooler applied and then be sold. The rear of the processor is essentially the same for both.

When it comes to actually putting it on a motherboard, along with the six channels of DDR4, we saw Intel’s reference board on the show floor as a representation of how to do it. A couple of companies had it on display, such as Microway, but this is a base board for others such as Supermicro or Tyan to build on or provide different functionality. The board is longer than ATX, but thinner. I can imagine seeing one of the future variants of this in a half-width 1U blade with all six channels and two add-in boards, pretty easily. This might give six KNL in a 1U implementation, as long as you can get rid of the heat. The socket was fairly elaborate, and it would appear that Intel has a demanding specification for heatsink mounting pressure. But it is worth noting that the board design does not have an Omni-Path connection, and thus for a server rack either additional KNLs or PCIe Omni-Path cards need to be added. But this setup should help users wanting to exploit Xeon Phi and MCDRAM in a single 1P node, running the OS on the Xeon Phi itself without MPI commands to use extra nodes. There seem to be a lot of people in the HPC community here at SC15 who are super excited about KNL.

Regarding availability of Knights Landing, we were told that Intel has made sales for installs in Q4, but commercial availability will be in Q1. We were also told to expect parts without MCDRAM.

Additional: On a run through the SC15 hall, I caught this gem from SGI. It's a dual socket motherboard, but because KNL processors have no QPI links and can only be used in 1P systems, this would be the equivalent of two KNL nodes on one physical board. Unfortunately they had a plastic screen in front, which distorts the images. |
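The die size and transistor density estimates in the Knights Landing entry above can be reproduced with a short calculation. This is a back-of-the-envelope sketch, not Intel data: it assumes a standard 300 mm wafer (the entry does not state the wafer size, though the quoted dimensions are consistent with it), and the function names are illustrative.

```python
WAFER_DIAMETER_MM = 300.0  # assumption: standard 300 mm wafer


def estimate_die(dies_across: float, dies_down: float,
                 wafer_mm: float = WAFER_DIAMETER_MM) -> tuple[float, float, float]:
    """Estimate die width, height (mm) and area (mm^2) from the number of
    dies counted across and down the wafer diameter."""
    width = wafer_mm / dies_across
    height = wafer_mm / dies_down
    return width, height, width * height


def density_mtr_per_mm2(transistors: float, area_mm2: float) -> float:
    """Transistor density in millions of transistors per square millimeter."""
    return transistors / area_mm2 / 1e6


width, height, area = estimate_die(9.4, 14)
print(f"Die: {width:.1f} mm x {height:.1f} mm, about {area:.0f} mm^2")

# The two transistor counts quoted in the entry: 7.1 billion and 8 billion.
for count in (7.1e9, 8.0e9):
    print(f"{count / 1e9:.1f}B transistors: "
          f"{density_mtr_per_mm2(count, area):.1f} M/mm^2")
```

Multiplying the unrounded dimensions gives an area of roughly 684 mm², marginally above the ~683 mm² obtained from the rounded 31.9 mm x 21.4 mm figures; either way the density range rounds to the same 10.4 to 11.7 million transistors per square millimeter.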

| 8:45p | NVIDIA Releases 359.00 WHQL Game Ready Driver

The season's flurry of games has brought along a blizzard of updates. Bad pun tie-ins aside, there is little that tarnishes a shiny new game like musty old drivers, and once again NVIDIA strives to keep up with the holiday rush with another new driver release.

NVIDIA's latest 359.00 WHQL drivers bring us game ready support for the newly released Assassin's Creed Syndicate, which is part of their Bullets or Blades bundle that is still running. Also receiving the game ready treatment is Blizzard's Overwatch beta, which begins this Friday and will run through the weekend. Alongside those two games, the recently released Star Wars: Battlefront is receiving some additional post-release performance optimizations.

Outside of game performance, the version 359.00 WHQL driver also brings support for GameWorks VR 1.0, which aims to provide performance optimizations to virtual reality users. Also, while there are no issue fixes for Windows 10 users, Windows 7 users did get an SLI profile update for Guild Wars 2.

Anyone interested can download the updated drivers through GeForce Experience or on the NVIDIA driver download page. |
